Pragmatic CS #8 Floating Point Arithmetic, JSON parsing, REST misunderstood, efficient cache-oblivious algorithm

Bringing together Graph Theory, Geometry and Floating Point Arithmetic

Using Graph Theory for Correction of Floating-point Imprecision in Computation Geometry

Floating point arithmetic:

a + (b - a)does not necessarily equalb. It might be equal, or it might be off by just a little, where “little” is relative to the larger of the two numbers. This means that when we compare two floating point numbers, we cannot do a precise comparison, we have to ask whether they differ by less than some epsilon value.

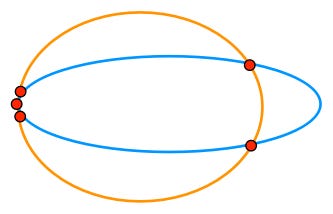

Above, there should only be three points of intersection – one on the left and two on the right. An error-correcting algorithm can treat it as a graph, and decide which edges to include or exclude.

To learn more about floating point arithmetic, this is a good resource covering the implications of using different rounding strategies for the basic operations, methods of measuring rounding error, and how understanding it is important for the design of computer systems.

REST is designed to be efficient for large-grain hypermedia data transfer.

Fielding came up with REST because the web posed a thorny problem of “anarchic scalability,” by which Fielding means the need to connect documents in a performant way across organizational and national boundaries. The constraints that REST imposes were carefully chosen to solve this anarchic scalability problem. Web service APIs that are public-facing have to deal with a similar problem, so one can see why REST is relevant there. Yet today it would not be at all surprising to find that an engineering team has built a backend using REST even though the backend only talks to clients that the engineering team has full control over.

Fielding’s original dissertation which proposed REST, was ironically about how much one-size-fits-all software architectures suck, and how you can better pick a software architecture appropriate for your needs. It included a taxonomy of alternative architectural styles that one could use for networked applications.

Building a high-performance JSON parser

Allocations affect performance - garbage collector might be fast to allocate and efficient to collect, but not allocating will always be faster.

When dealing with data, allocations can be the biggest performance cost of an algorithm. O(n) allocations—Per token or per byte—can be the limiting constraint on an algo, you can never go below that floor.

API design influences performance. The

encoding/json.DecoderAPI requires allocations as returning a primitive value via an interface causes it to escape to the heap — it effectively becomes a pointer to the value. This includes strings.Careful attention to the per byte and per token overheads delivered a JSON decoder that performs 2-3x faster than

enconding/json.Decoder.Tokenand 8-10x faster with the alternativeNextTokenAPI.O(n) functions per bytes of input are the second limit. Optimising the hot path to convert per byte into per token/line/message/etc batches is key to avoiding function call overhead.

van Emde Boas layout as an efficient cache-oblivious algorithm

If we pack the the BST in breadth-first order, we could potentially have a situation where almost every vertex in a BST search path, except for the first few, lies in a different page, and thus we’ll have to use O(logN−logB) page accesses. The van Embde Boas layout is a clever way of ordering the vertices of a binary search tree in a recursive, fractal-like manner such that each page access will fetch the next few vertices that will be queried, so that the next few accesses will be contained within that page.