Pragmatic CS #7 Reverse engineering Snapchat, teaching neural networks physics, domain-specific hardware accelerators, ego graphs

Can your neural network handle all that chaos?

Picture a swinging pendulum, moving back and forth in space over time. Now look at a snapshot of that pendulum. The snapshot cannot tell you where that pendulum is in its arc or where it is going next. Conventional neural networks operate from a snapshot of the pendulum. Neural networks familiar with the Hamiltonian flow understand the entirety of the pendulum's movement—where it is, where it will or could be, and the energies involved in its movement.

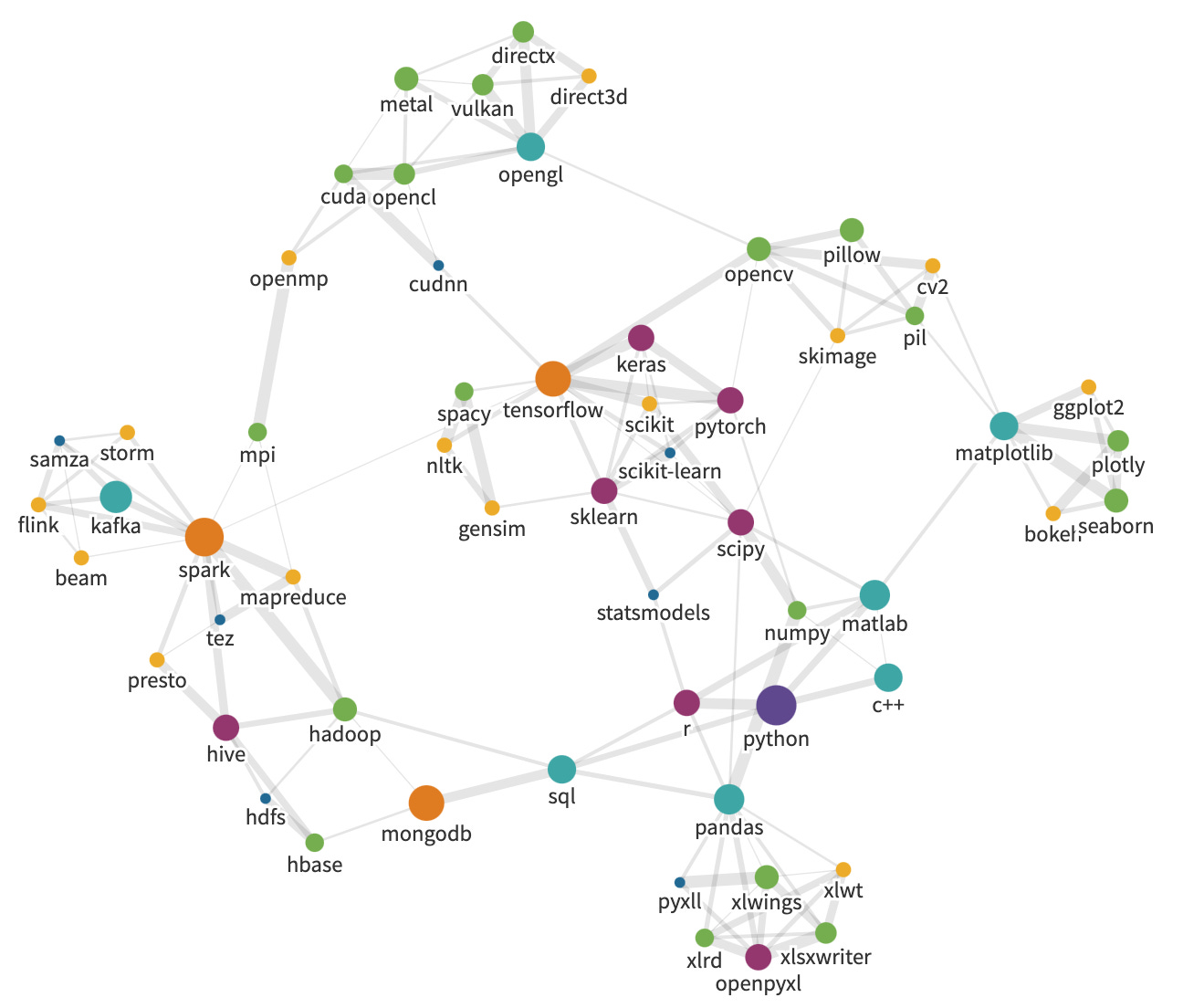

Creating Ego Graphs from Google Search Autosuggestions

An ego graph is the graph of all nodes that are less than a certain distance from the node in question.

The weight of the edge from term A and term B is the ranking in range 1 to 5 from the autosuggestion list. To make the graph undirected, the weights are summed in both directions to get an edge weight between 1 and 10. The distance of each edge is then simply 11-weight, which gives a minimum distance of 1 between terms.

Domain Specific Hardware Accelerators

Domain-specific accelerators exploit these main techniques for performance and efficiency gains:

Data specialization: Specialized operations on domain-specific data types can do in one cycle what may take tens of cycles on a conventional computer. Specialized logic to perform an inner-loop function gains in both performance and efficiency.

Parallelism: High degrees of parallelism, often exploited at several levels, provide gains in performance. To be effective, the parallel units must exploit locality and make very few global memory references or their performance will be memory bound.

Local and optimized memory: By storing key data structures in many small, local memories, very high memory bandwidth can be achieved with low cost and energy. Access patterns to global memory are optimized to achieve the greatest possible memory bandwidth. Key data structures may be compressed to multiply bandwidth. Memory accesses are load-balanced across memory channels and carefully scheduled to maximize memory utilization.

Reduced overhead: Specializing hardware eliminates or reduces the overhead of program interpretation.

Modifying the underlying algorithm is usually needed in order to achieve high speedups and gains in efficiency from specialized hardware. Existing algorithms are highly tuned for conventional general-purpose processors, and thus rarely optimal for a specialized solution.

The algorithm and hardware must be codesigned to jointly optimize performance and efficiency while preserving or enhancing accuracy.

The first part of this sequel was about the obfuscation techniques used by Snapchat, and the sequel is about how to bypass all of it…